ZCT and cycles

by Leandro Lucarella on 2008-08-30 16:08 (updated on 2008-08-30 16:08) - with 0 comment(s)

There's not much to think about here (I think ;).

The ZCT doesn't help in reclaiming cycles: it tracks cells with a zero count, and the cells in a cycle can't possibly have a zero count (even using deferred reference counting), because a cycle is, by definition, made of pointers between heap cells, and those are always counted.

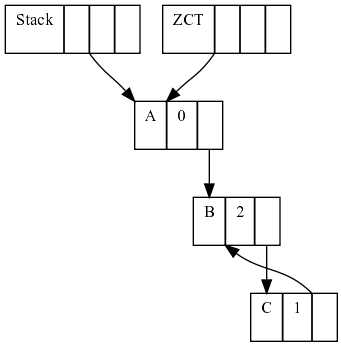

Let's see a simple example:

First, we have 3 heap cells: A, pointed to only by the stack (thus with rc 0 and added to the ZCT), and B, pointed to by A and in a cycle with C.

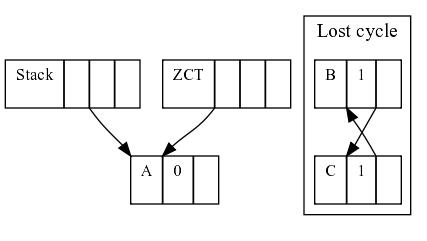

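If some time later A stops pointing to B, nothing outside the cycle B-C points to it anymore. But B and C still point to each other, so their counts stay at 1, they never enter the ZCT (so the ZCT can't do anything about it either), and we lose track of the cycle.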
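The scenario can be replayed with a tiny simulation. This is only a sketch under my own assumptions: the `Cell` layout, the `zct` table and the helpers `alloc`, `setNext` and `loseCycle` are made-up names for illustration, not part of any real collector.

```d
// Hypothetical cell layout, only for this example.
struct Cell
{
    string name;
    int rc;      // counts heap pointers only; stack pointers are ignored
    Cell* next;  // one outgoing pointer is enough here
}

// Zero count table: the cells with rc == 0.
bool[Cell*] zct;

Cell* alloc(string name)
{
    auto c = new Cell;
    c.name = name;
    zct[c] = true;   // a fresh cell has no heap pointer to it yet
    return c;
}

// Heap pointer update: from.next = to, keeping rc and the ZCT in sync.
void setNext(Cell* from, Cell* to)
{
    if (to !is null)
    {
        ++to.rc;
        zct.remove(to);
    }
    Cell* old = from.next;
    from.next = to;
    if (old !is null && --old.rc == 0)
        zct[old] = true;
}

// Builds the A, B, C scenario from the text and returns the three cells.
Cell*[] loseCycle()
{
    zct = null;           // start with an empty table
    auto a = alloc("A");  // pointed to only by the stack: stays in the ZCT
    auto b = alloc("B");
    auto c = alloc("C");
    setNext(a, b);        // A -> B
    setNext(b, c);        // B -> C
    setNext(c, b);        // C -> B, closing the cycle
    setNext(a, null);     // A stops pointing to B
    return [a, b, c];
}
```

After `loseCycle()` runs, only A is left in the table; B and C both sit at rc 1, so no amount of ZCT reconciliation will ever look at them.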

Does this mean that deferred reference counting is useless? I think not. It could still be useful to do some kind of incremental garbage collection, minimizing pauses in a lot of cases. As long as the ZCT reconciliation can find free cells, the GC pauses would be as short as tracing only the stack, which I think would be pretty short.

Mental note

See how often cycles are found in typical D programs.

If the ZCT reconciliation can't find free cells, a full collection should be triggered, using a tracing collector to inspect both the stack and the heap. Alternatively, one can use a potential cycle table to store cells whose rc has been decremented to a value higher than zero, and then trace just those cells to look for cycles, but we will see this algorithm in more detail in the future.
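To make the fallback concrete, here is a rough sketch of a full tracing collection (a plain mark and sweep, which is my choice for illustration; any tracing collector would do) reclaiming an unreachable cycle. The heap and the stack are simulated with arrays, and `Cell`, `mark`, `fullCollect` and `collectLostCycle` are hypothetical names.

```d
// Hypothetical cell layout for a simulated heap.
struct Cell
{
    bool marked;       // set while tracing from the roots
    bool freed;        // stands in for returning memory to the allocator
    Cell*[] children;  // outgoing heap pointers
}

// Mark everything reachable from c.
void mark(Cell* c)
{
    if (c is null || c.marked)
        return;
    c.marked = true;
    foreach (child; c.children)
        mark(child);
}

// Full collection: trace from the stack, then sweep the whole heap.
// Returns the number of cells reclaimed.
size_t fullCollect(Cell*[] heap, Cell*[] stack)
{
    foreach (c; heap)
        c.marked = false;
    foreach (root; stack)
        mark(root);

    size_t reclaimed;
    foreach (c; heap)
    {
        if (!c.marked && !c.freed)
        {
            c.freed = true;
            c.children = null;
            ++reclaimed;
        }
    }
    return reclaimed;
}

// A is on the stack; B and C form a cycle nobody else points to.
size_t collectLostCycle()
{
    auto a = new Cell;
    auto b = new Cell;
    auto c = new Cell;
    b.children ~= c;
    c.children ~= b;
    return fullCollect([a, b, c], [a]);
}
```

Note that the sweep has to visit every cell in the heap, which is exactly the long pause that deferred reference counting tries to avoid in the common case.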

Avoiding counter updates

by Leandro Lucarella on 2008-08-25 03:44 (updated on 2008-08-25 03:44) - with 0 comment(s)

The main drawback of reference counting (leaving cycles aside) is probably the high overhead it imposes on the client program. Every pointer update has to manipulate the reference counters of both the old and the new objects.
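To give an idea of the cost, this is roughly the work hidden behind every single pointer store in a naive implementation. It is just a sketch: the `Cell` layout and the `updatePointer` and `release` helpers are names I made up, not anything from the D runtime.

```d
// Hypothetical cell layout for illustration.
struct Cell
{
    int rc;       // number of pointers currently referencing this cell
    Cell* field;  // a single pointer field, to keep the sketch small
}

// Store target into slot, keeping both counters in sync.
void updatePointer(ref Cell* slot, Cell* target)
{
    // Increment first, so that storing the same pointer again
    // never drops the count to zero by accident.
    if (target !is null)
        ++target.rc;
    Cell* old = slot;
    slot = target;
    if (old !is null && --old.rc == 0)
        release(old);
}

// Reclaim a cell whose count reached zero: drop what it points to.
void release(Cell* cell)
{
    updatePointer(cell.field, null);
    // A real collector would return the memory to the allocator here.
}
```

So a plain `slot = target` turns into two null checks, an increment, a decrement and possibly a recursive release.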

Function calls

This includes every object passed as an argument to a function, which one can guess would be a lot (every method call, for example). However, this kind of rc update can easily be optimized away. Let's see an example:

    class SomeClass
    {
        void some_method() {}
    }

    void some_function(SomeClass o)
    {
        o.some_method();
    }

    void main()
    {
        auto o = new SomeClass;
        some_function(o);
    }

It's clear that o should live until the end of main(), and that there is no chance o could be garbage collected until main() finishes. To express this, it is enough to have o's rc = 1. There is no need to increment it when some_function() is called, nor when some_method() is called.

So, theoretically (I really didn't prove it =), it is not necessary to update an object's rc when it is used as an argument.
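The difference can be counted with a small experiment. This is only a sketch under assumptions of mine: the counter lives in the object, a hypothetical `Counted` struct plays the role of a counted reference (copying it increments, destroying it decrements), and `rcUpdates`, `byCopy`, `byBorrow` and `updatesDuringCall` are made-up names.

```d
int rcUpdates;   // tally of counter updates, only for the demonstration

class SomeClass
{
    int rc = 1;  // hypothetical counter: the creator holds one reference
    void some_method() {}
}

// Hypothetical counted reference: every copy touches the counter.
struct Counted
{
    SomeClass obj;
    this(this) { if (obj !is null) { ++obj.rc; ++rcUpdates; } }
    ~this()    { if (obj !is null) { --obj.rc; ++rcUpdates; } }
}

// Naive: the argument is copied, so the call pays for two updates.
void byCopy(Counted c) { c.obj.some_method(); }

// Optimized: the caller's reference keeps the object alive for the
// whole call, so the callee borrows it without touching rc.
void byBorrow(ref Counted c) { c.obj.some_method(); }

// Returns how many counter updates a single call costs.
int updatesDuringCall(bool borrow)
{
    auto o = Counted(new SomeClass);
    int before = rcUpdates;
    if (borrow)
        byBorrow(o);
    else
        byCopy(o);
    int cost = rcUpdates - before;
    assert(o.obj.rc == 1);  // o is still alive and holds the only reference
    return cost;
}
```

Passing by copy costs one increment and one decrement per call, while borrowing costs nothing, and in both cases the object ends the call with rc = 1.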

Local pointers update

What about pointers in the stack? Most of the time, pointer updates are done on local variables (pointers in the stack, not in the heap). The GC book talks about 99% of pointer updates being done on local variables for Lisp and ML. I don't think D could reach that much, but I guess it could be pretty high too.

Mental note

Gather some statistics about the number of local pointer updates vs. heap pointer updates in D.

Fortunately, Deutsch and Bobrow created an algorithm to completely ignore local pointer updates, at the cost of relying on some kind of tracing collector, but one that only has to trace the stack (which should be pretty small compared to the heap).

What the algorithm proposes is to use simple assignment when updating local pointers. Updates to pointers living in the heap manipulate rc as usual, but when the count drops to 0, the object is added to a zero count table (ZCT) (and removed if some pointer update increments the counter again).

Finally, at some point (usually when you run out of memory), the ZCT has to be reconciled, following some simple steps: trace the stack looking for pointers and increment the counters of the objects they point to, then free any object in the ZCT whose rc is still 0. Finally, decrement again the counters of all the objects pointed to by the stack pointers.
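The reconciliation steps can be sketched like this. It is my own simplified reading of the algorithm, not a faithful implementation: the stack is simulated with an array of roots, freeing a cell just sets a flag, and `Cell`, `zct`, `alloc`, `linkHeap`, `reconcile` and `demo` are hypothetical names.

```d
// Hypothetical cell layout for a simulated heap.
struct Cell
{
    int rc;            // heap references only; the stack is not counted
    Cell*[] children;  // outgoing heap pointers
    bool freed;        // stands in for returning memory to the allocator
}

bool[Cell*] zct;       // zero count table

Cell* alloc()
{
    auto c = new Cell;
    zct[c] = true;     // no heap pointer references a fresh cell
    return c;
}

// Heap pointer creation: counted as usual.
void linkHeap(Cell* from, Cell* to)
{
    from.children ~= to;
    ++to.rc;
    zct.remove(to);
}

// Reconcile the ZCT against the stack; returns how many cells were freed.
size_t reconcile(Cell*[] stack)
{
    // 1. Temporarily count the references held by the stack.
    foreach (p; stack)
        if (p !is null)
            ++p.rc;

    // 2. Candidates still at zero are unreachable: free them, and
    //    their children too if that drops them to zero.
    size_t freedCount;
    auto pending = zct.keys;
    zct = null;
    for (size_t i = 0; i < pending.length; ++i)
    {
        auto c = pending[i];
        if (c.rc != 0)
            continue;              // kept alive by the stack
        c.freed = true;
        ++freedCount;
        foreach (child; c.children)
            if (--child.rc == 0)
                pending ~= child;
        c.children = null;
    }

    // 3. Undo step 1; cells referenced only by the stack go back to the ZCT.
    foreach (p; stack)
        if (p !is null && --p.rc == 0)
            zct[p] = true;
    return freedCount;
}

size_t demo()
{
    zct = null;
    auto a = alloc();          // referenced from the stack only
    auto b = alloc();          // referenced from the heap, by a
    auto garbage = alloc();    // referenced by nobody
    auto inner = alloc();      // reachable only through garbage
    linkHeap(a, b);
    linkHeap(garbage, inner);
    return reconcile([a]);     // the simulated stack holds just a
}
```

In `demo()`, the garbage cell and the cell hanging from it are freed, while the cell held by the stack survives and goes back into the table for the next reconciliation. Nothing in the heap is traced beyond the cells that are actually freed.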

This technique seems to be a good mix of both reference counting and tracing collectors: small pauses (the stack is usually small), low overhead for counter manipulation. The only missing point is cycles. At first sight, if we need a tracing collector for cycles, this algorithm seems pretty useless because you have to trace all the heap and stack to free cycles, so the optimization is lost. Big pauses are here again.

I have the feeling I'm missing something and the ZCT could be useful when it comes to reclaiming cycles, but I have to think a little more about that.